AI Breakthrough Enables Real-Time Decoding of Inner Speech

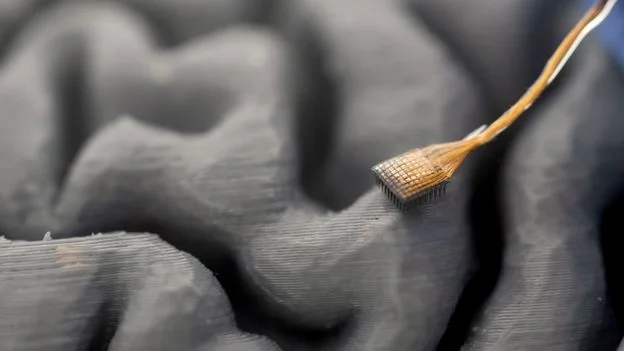

Researchers at Stanford and UC Davis are using implanted microelectrodes and AI to translate brain signals into text as people imagine speaking, reaching up to 74% accuracy for inner speech in real time. The work is a step toward communication that approaches mind reading for patients with severe motor impairments, and it builds on earlier work that decoded attempted speech and other neural signals. The technology remains imperfect: before it can be widely used or commercialized, it will need to record from more neurons and sample more areas of the brain. Researchers and companies are pursuing those goals while continuing to weigh the ethical considerations.