Maia 200 Pushes Cloud AI In-House, But Nvidia Keeps the Data Center Edge

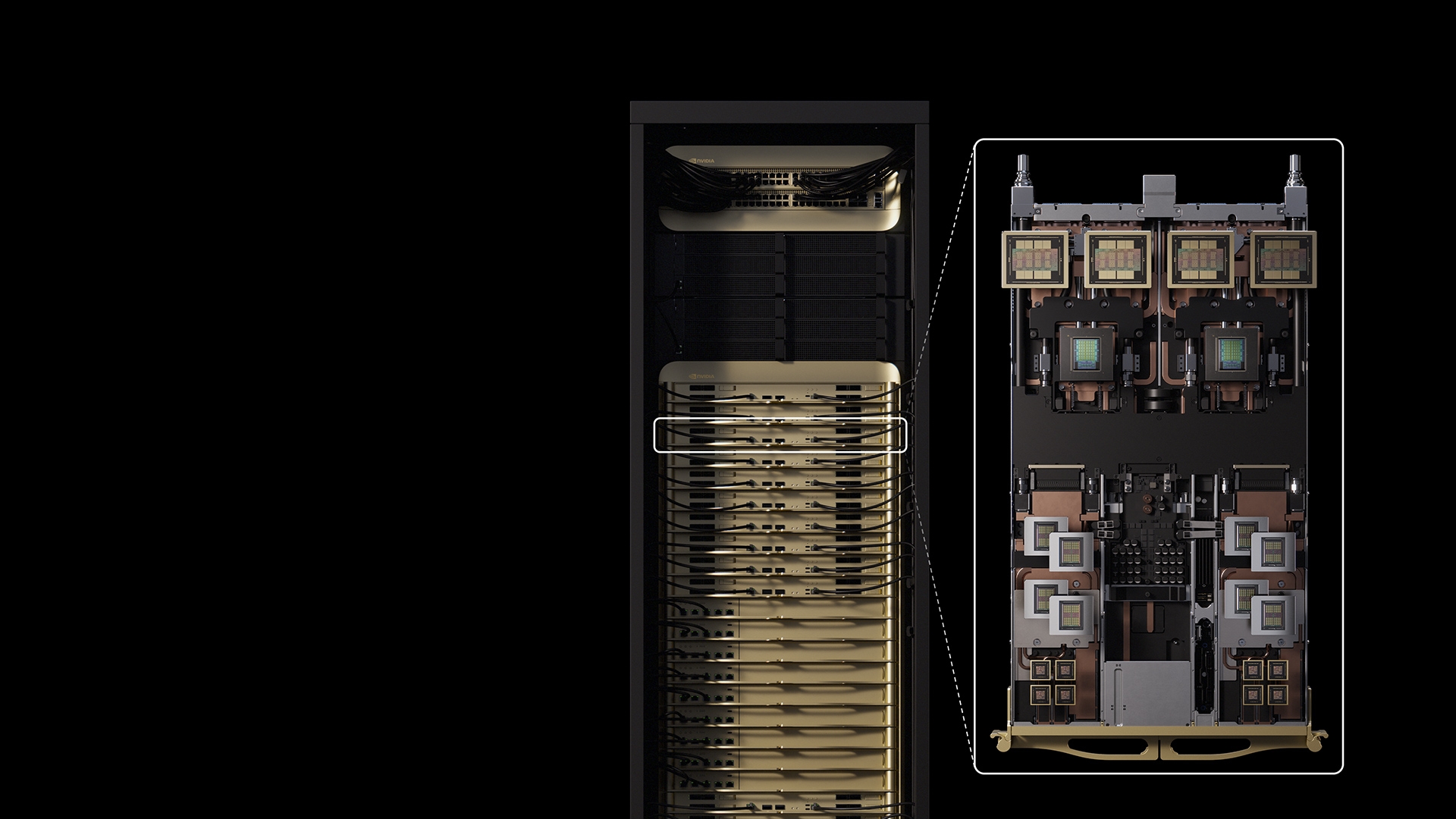

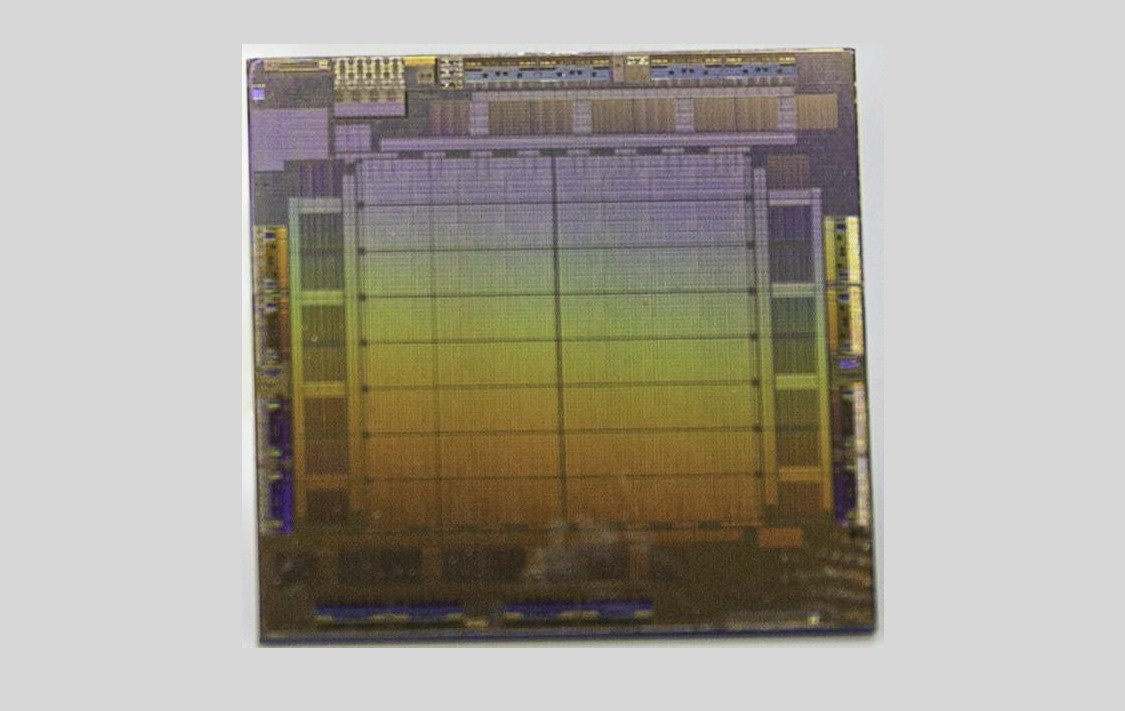

Microsoft’s Maia 200 is an in‑house AI inference accelerator for Azure. Microsoft claims strong performance per dollar for the chip, which will power OpenAI models, a sign of rising cloud‑provider pressure on Nvidia. Maia 200 underscores a shift toward custom silicon, but Nvidia still leads the data‑center AI market thanks to its broad GPU ecosystem and software stack. Cloud‑provider alternatives may erode Nvidia’s pricing power over time, yet a rapid disruption to its position appears unlikely, even as valuations remain rich given AI growth.