Maia 200 Boost for MSFT Fails to Lift Stock Amid Xbox Shakeups

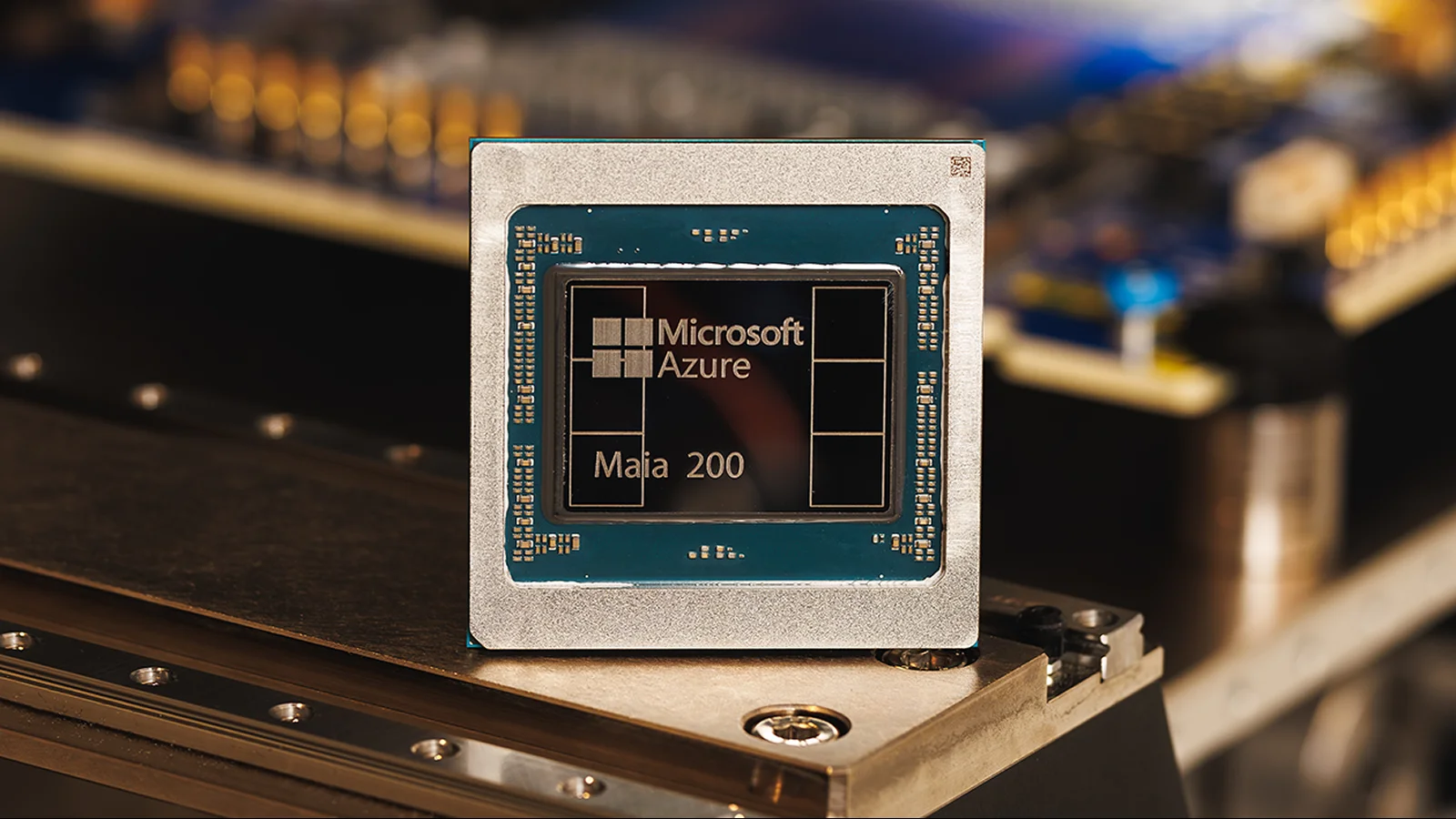

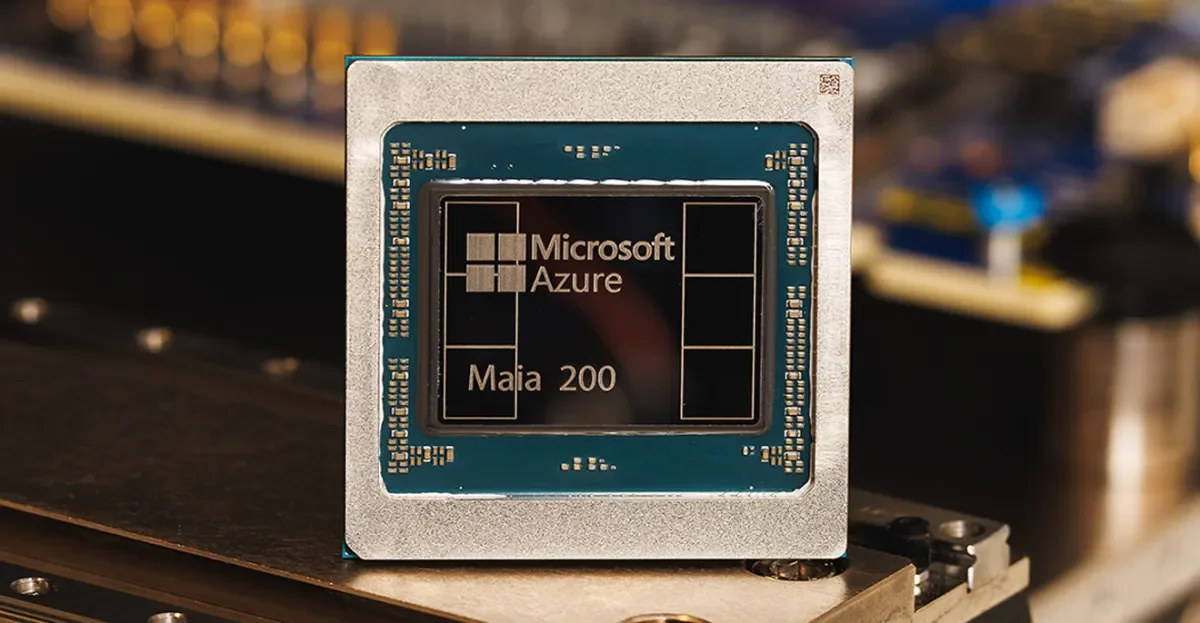

Goldman Sachs has maintained its Buy rating on Microsoft following the unveiling of the Maia 200 AI inference accelerator, praising the company’s AI compute advances and keeping its $600 price target, even as MSFT shares slipped about 2.5% in trading. The Maia 200’s parity with competitors supports Microsoft’s AI compute margins narrative. Meanwhile, a series of Xbox leadership changes (Phil Spencer’s retirement, Asha Sharma’s ascent, Sarah Bond’s departure, and Matt Booty’s promotion) signals an internal reorganization. Analysts remain bullish overall, with a Strong Buy consensus and an average price target of around $594, implying roughly 53% upside.