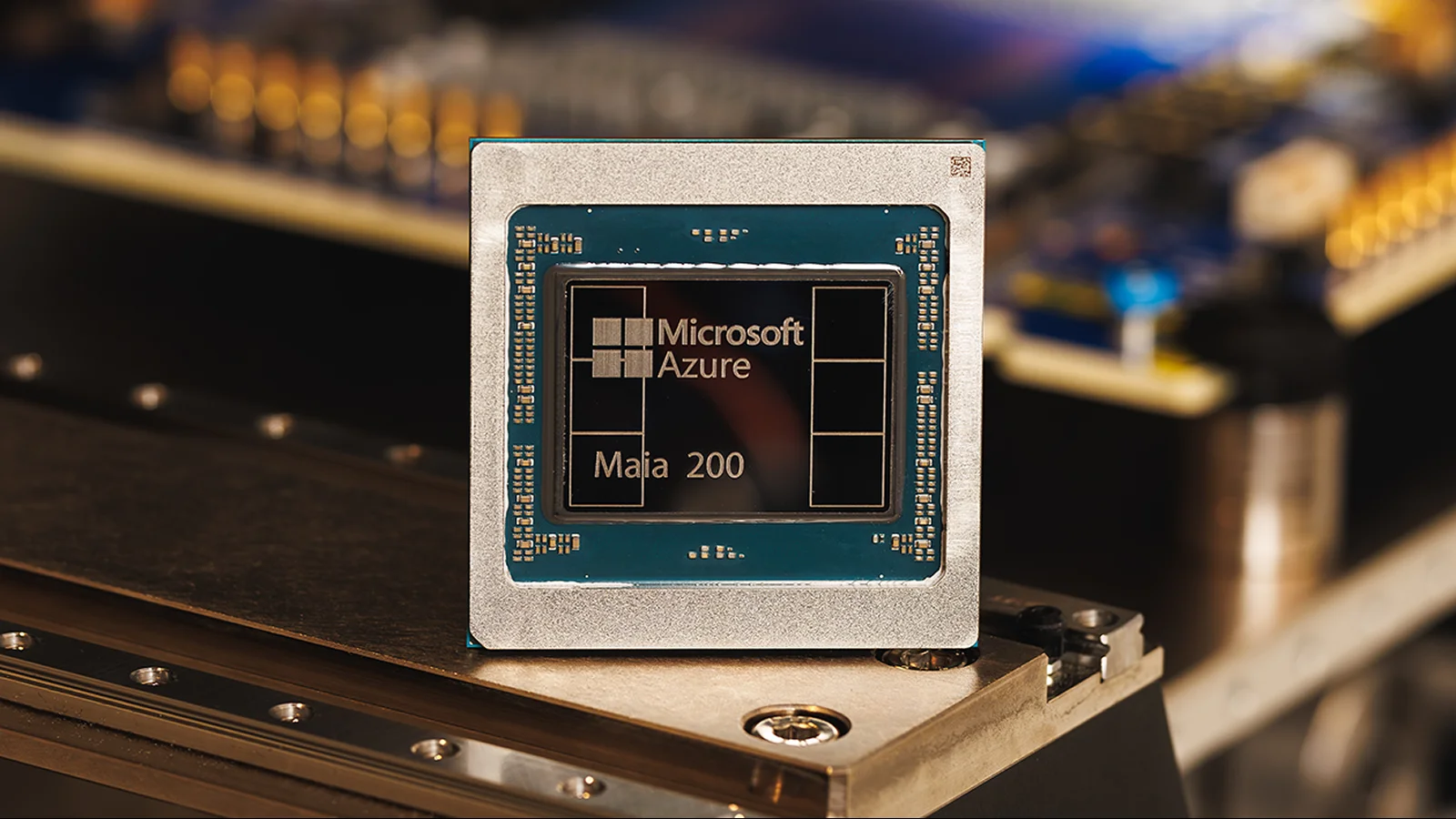

Microsoft's Maia 200 AI chip claims triple Trainium's FP4 performance and tops Google's TPU at FP8 for inference

Microsoft has unveiled Maia 200, an AI inference accelerator for Azure. The company claims it delivers more than 10 petaflops at FP4 precision and 5 petaflops at FP8, which it says amounts to three times the FP4 performance of Amazon's Trainium Gen3 and higher FP8 performance than Google's TPU Gen7. Built on TSMC's 3-nanometer process with about 100 billion transistors, Maia 200 targets data-center inference and is intended to speed up Copilot and Azure OpenAI workloads. Its memory system is designed to keep model weights local to the accelerator. The chip is currently deployed in a US data center, with broader Azure availability planned.
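To put the quoted figures in perspective, here is a rough back-of-envelope sketch that uses only the numbers Microsoft cites. It treats "more than 10 petaflops" as exactly 10, so the derived values are approximate, and it works from claimed peak throughput rather than measured inference performance. The 70-billion-parameter model size is a hypothetical chosen purely for illustration; it shows why lower-precision formats such as FP4 make it easier to keep a model's weights in the accelerator's local memory.

```python
# Back-of-envelope arithmetic based on the peak figures quoted in the article.
# "More than 10 petaflops" is treated as exactly 10 here, so results are approximate.

MAIA_FP4_PFLOPS = 10.0                   # claimed FP4 peak (lower bound)
MAIA_FP8_PFLOPS = 5.0                    # claimed FP8 peak
CLAIMED_FP4_SPEEDUP_VS_TRAINIUM3 = 3.0   # Microsoft's "3x" claim

BITS_PER_BYTE = 8


def implied_baseline(maia_pflops: float, speedup: float) -> float:
    """Competitor peak throughput implied by a claimed speedup ratio."""
    return maia_pflops / speedup


def weight_footprint_gb(num_params: float, bits_per_weight: int) -> float:
    """Memory needed for the weights alone (ignores KV cache and activations)."""
    return num_params * bits_per_weight / BITS_PER_BYTE / 1e9


if __name__ == "__main__":
    ratio = MAIA_FP4_PFLOPS / MAIA_FP8_PFLOPS
    print(f"FP4 / FP8 peak ratio: {ratio:.1f}x")

    trn3 = implied_baseline(MAIA_FP4_PFLOPS, CLAIMED_FP4_SPEEDUP_VS_TRAINIUM3)
    print(f"Implied Trainium Gen3 FP4 peak: ~{trn3:.2f} PFLOPS")

    params = 70e9  # hypothetical 70B-parameter model, for illustration only
    for bits in (16, 8, 4):
        print(f"{bits}-bit weights: {weight_footprint_gb(params, bits):.0f} GB")
```

The quoted numbers imply a 2x ratio between FP4 and FP8 peak throughput, and halving the bits per weight halves the memory needed to hold a model, which is one reason FP4 is attractive for inference when weights must stay on or near the chip.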