Maia 200 AI chip promises threefold FP4 performance, edging out TPU and Trainium in inference

TL;DR Summary

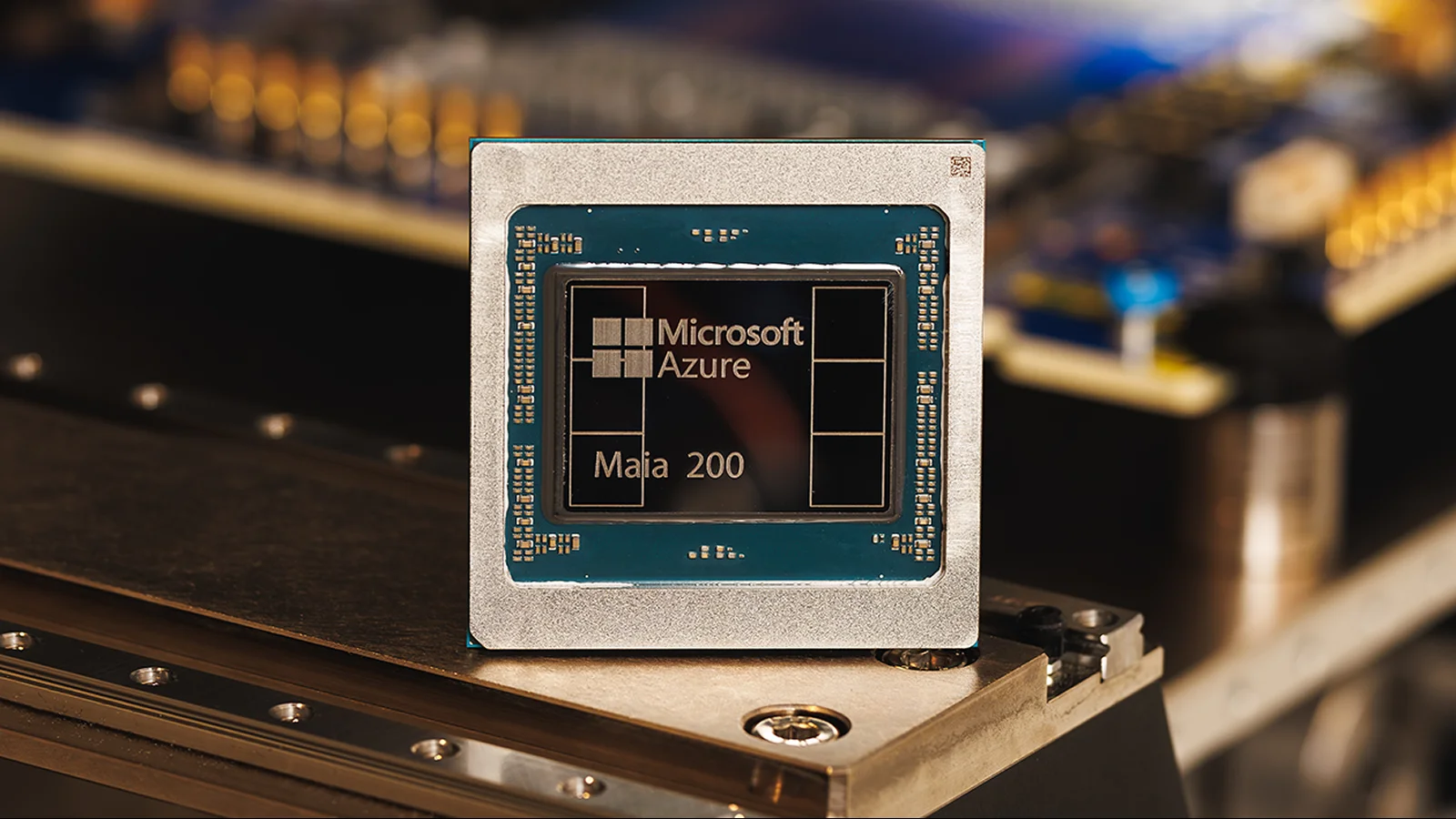

Microsoft unveiled Maia 200, an AI inference accelerator for Azure, claiming it delivers over 10 petaflops (PFLOPS) at FP4 precision and 5 PFLOPS at FP8. According to Microsoft, that is three times the FP4 performance of Amazon's Trainium Gen3 and higher FP8 performance than Google's TPU Gen7. Built on TSMC's 3-nanometer process with about 100 billion transistors, Maia 200 is designed for data-center inference to speed up Copilot and Azure OpenAI workloads, and its memory system is built to keep model weights local to the chip. It is currently deployed in a US data center, with broader Azure availability planned.

- Microsoft says its newest AI chip Maia 200 is 3 times more powerful than Google's TPU and Amazon's Trainium processor (Live Science)

- Microsoft takes aim at Google, Amazon, and Nvidia with new AI chip (Yahoo Finance)

- Maia 200: The AI accelerator built for inference (The Official Microsoft Blog)

- Microsoft rolls out next generation of its AI chips, takes aim at Nvidia's software (Reuters)

- Microsoft Unveils Latest AI Chip to Reduce Reliance on Nvidia (Bloomberg.com)

