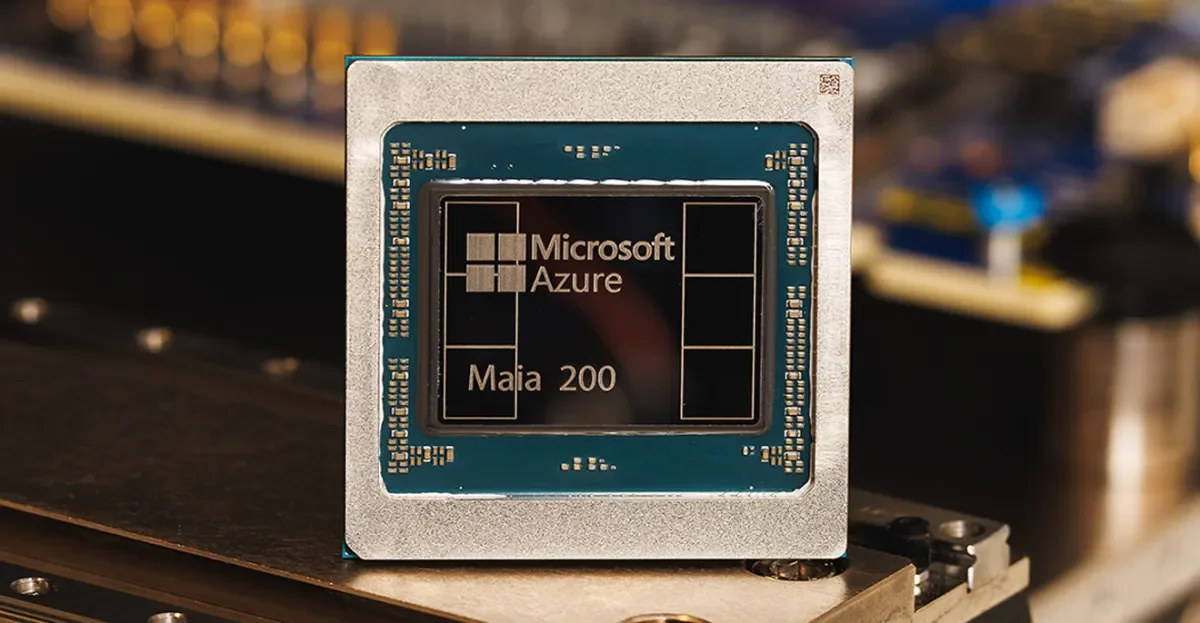

Maia 200: Microsoft’s 3nm AI accelerator aims to outrun rivals

TL;DR Summary

Microsoft unveiled Maia 200, its next‑generation AI accelerator, built on TSMC’s 3nm process with more than 100 billion transistors and designed to host OpenAI’s GPT‑5.2 in Foundry and Microsoft 365 Copilot. Microsoft claims Maia 200 delivers about 3× the FP4 performance of Amazon’s Trainium Gen 3, FP8 performance above Google’s TPU, and roughly 30% better performance per dollar than its current hardware. Deployment starts today in the Azure US Central region, with more regions to follow. An early SDK preview will be available to researchers, developers, labs, and open‑source contributors. The launch comes as Microsoft and its rivals push into next‑generation AI chips.

- Microsoft’s latest AI chip goes head-to-head with Amazon and Google (The Verge)

- Microsoft reveals second generation of its AI chip in effort to bolster cloud business (CNBC)

- Maia 200: The AI accelerator built for inference (The Official Microsoft Blog)

- Microsoft Unveils Latest AI Chip to Reduce Reliance on Nvidia (Bloomberg)

- Microsoft announces powerful new chip for AI inference (TechCrunch)

