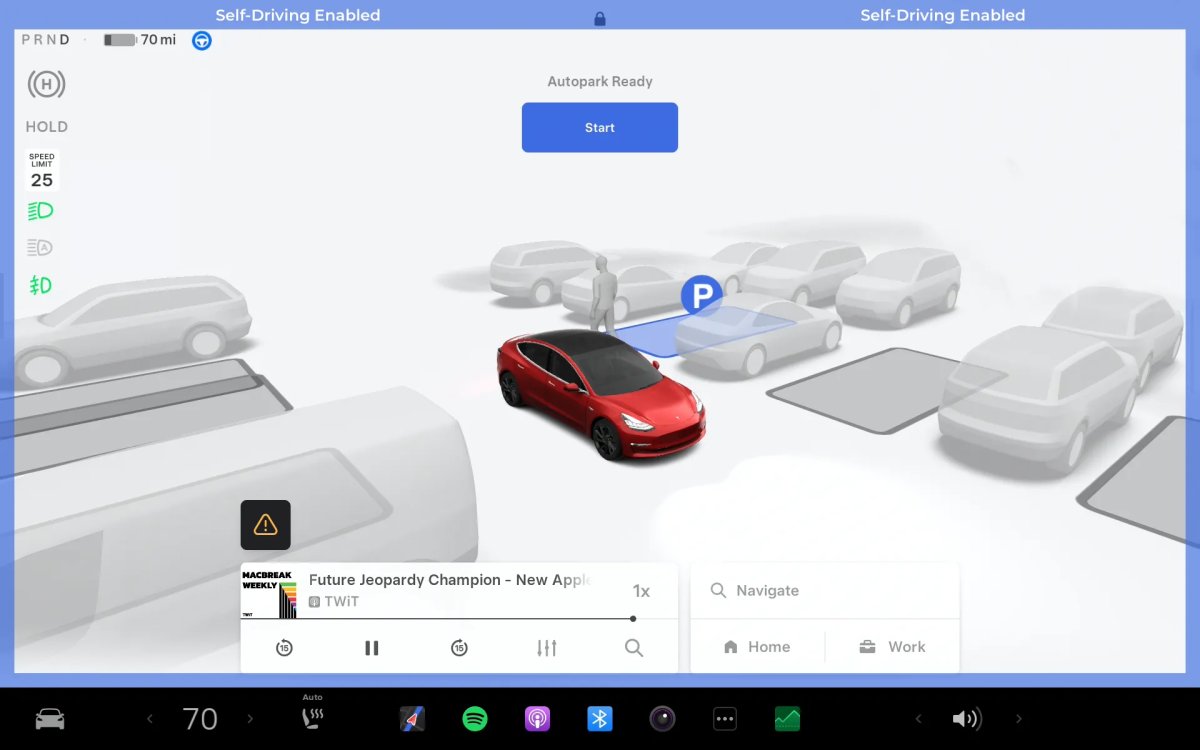

Tesla's Vision-First Autonomy: Cameras Over Radar and LiDAR

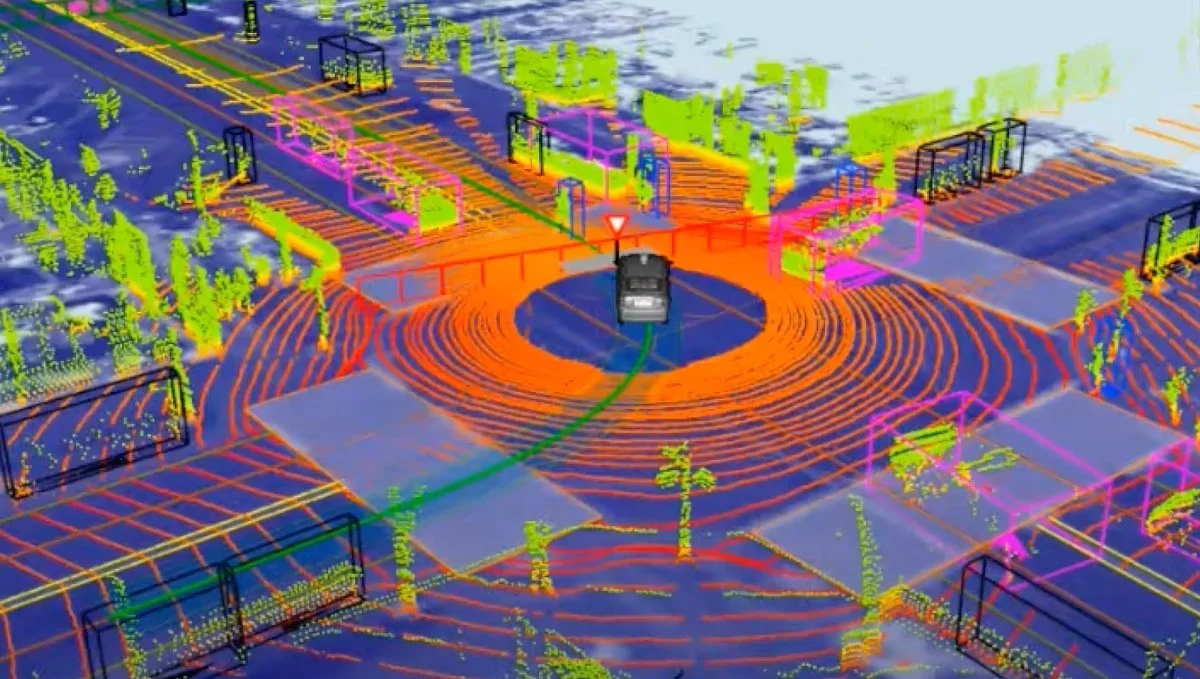

Tesla has doubled down on a vision-only approach to autonomy, removing radar and relying on eight cameras and a neural-network world model. The company argues that fusing camera data with radar or LiDAR produces conflicting signals that can undermine safety. It has pursued this stance since 2021; radar is still present on some cars but is not used for FSD. Tesla trains its networks to estimate depth and velocity from vast amounts of camera data, using measurements from auxiliary sensors as ground truth. To keep compute scalable, it uses a foveated processing approach that handles distant “priority” regions at high resolution while downsampling the rest of the frame. The gamble aims for cheaper, more scalable autonomy, in contrast with rivals’ sensor-fusion stacks.
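To make the foveated idea concrete, here is a minimal sketch in plain Python. It is not Tesla's implementation, which the article does not detail. It only illustrates the general technique of keeping one region at full resolution while block-averaging the whole frame. The function names (`downsample`, `foveate`, `pixel_count`), the toy 8x8 frame, the region coordinates, and the downsampling factor are all hypothetical choices made for illustration.

```python
def downsample(image, factor):
    """Block-average a 2D grid by an integer factor.

    Assumes both dimensions divide evenly by `factor`.
    """
    rows, cols = len(image), len(image[0])
    if rows % factor or cols % factor:
        raise ValueError("image dimensions must be divisible by factor")
    out = []
    for r in range(0, rows, factor):
        out_row = []
        for c in range(0, cols, factor):
            # Collect the factor x factor block and replace it with its mean.
            block = [image[r + dr][c + dc]
                     for dr in range(factor)
                     for dc in range(factor)]
            out_row.append(sum(block) / len(block))
        out.append(out_row)
    return out


def foveate(image, region, factor):
    """Return (full-resolution crop of the priority region, downsampled frame).

    `region` is (top, bottom, left, right) with exclusive bottom/right bounds.
    """
    top, bottom, left, right = region
    crop = [row[left:right] for row in image[top:bottom]]
    return crop, downsample(image, factor)


def pixel_count(grid):
    """Number of values in a 2D grid."""
    return sum(len(row) for row in grid)


# Toy 8x8 "frame" whose pixel value encodes its position.
frame = [[r * 8 + c for c in range(8)] for r in range(8)]

# Hypothetical priority region: a 2x4 strip near the middle of the frame.
crop, periphery = foveate(frame, region=(2, 4, 2, 6), factor=4)

full_cost = pixel_count(frame)                             # 64 values
foveated_cost = pixel_count(crop) + pixel_count(periphery)  # 8 + 4 = 12 values
```

In this toy case the downstream model would see 12 values instead of 64, while the priority strip keeps every original pixel. A real system would choose the priority region dynamically and work on camera tensors rather than nested lists; those details are outside what the article describes.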