Trying a free, local coding AI stack: Goose, Ollama, and Qwen3-coder

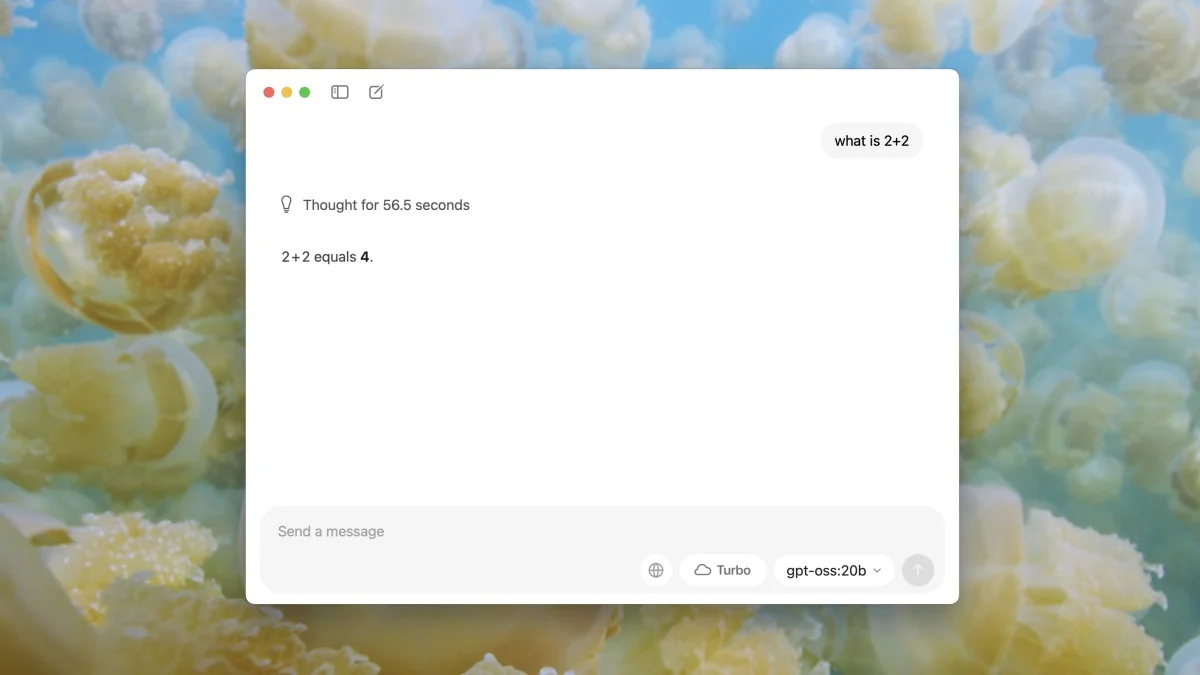

A ZDNET tester explores a fully local, free coding AI stack built from Goose (agent framework), Ollama (LLM server), and Qwen3-coder (the coding model). The write-up covers installation and a sample WordPress plugin test. The setup ran on a powerful Mac with 128GB of RAM and can be competitive with cloud options, but early results showed accuracy issues and required multiple retries. The verdict: promising, but not yet ready to fully replace Claude Code or Codex.
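To make the "LLM server" role concrete, here is a minimal sketch of how a script could send a prompt to a local Ollama instance over its HTTP API, bypassing Goose entirely. It assumes Ollama is listening on its default port (11434) and that the model was pulled under the tag `qwen3-coder`; the tag on your machine may differ, and the helper names `build_request` and `generate` are hypothetical, not taken from the article.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"
# Assumption: the model tag; check `ollama list` for the tag actually installed.
MODEL_TAG = "qwen3-coder"


def build_request(prompt: str, model: str = MODEL_TAG,
                  url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, timeout: float = 600.0) -> str:
    """Send the prompt to the local server and return the generated text.

    Requires a running Ollama server; large local models can be slow,
    hence the generous timeout.
    """
    with urllib.request.urlopen(build_request(prompt), timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["response"]
```

With the server running, `generate("Write a WordPress plugin skeleton in PHP")` would return the model's reply as a string. An agent framework such as Goose sits on top of calls like this, adding file access, tool use, and retries.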