Google's TurboQuant Slashes LLM Memory 6x Without Sacrificing Output

TL;DR Summary

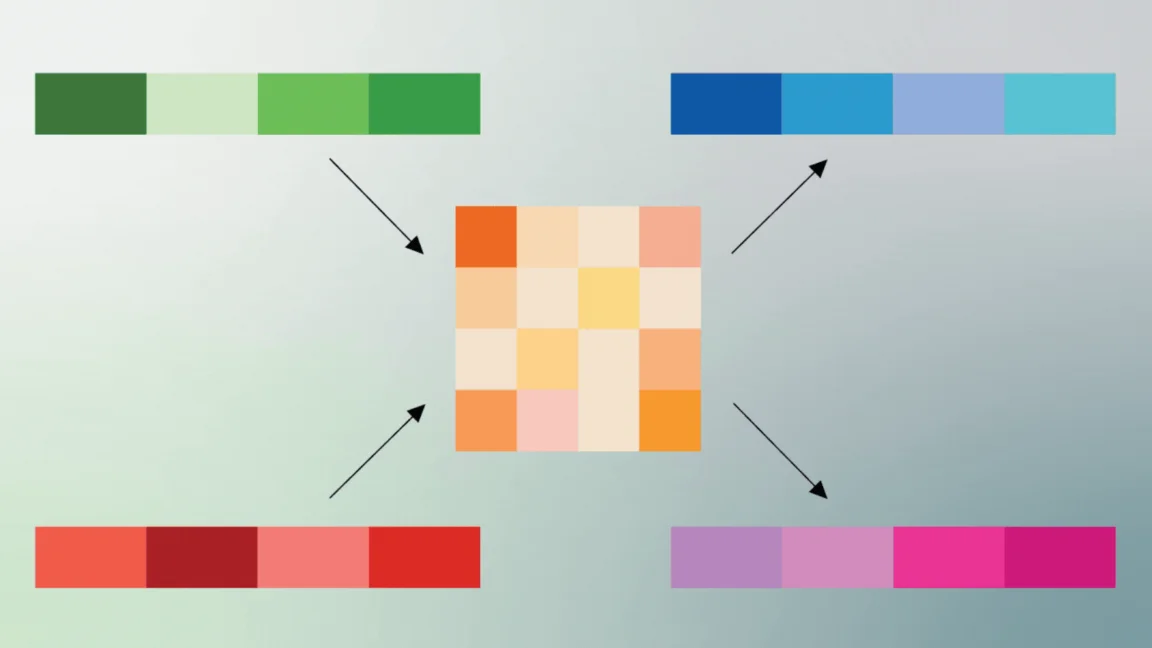

Google Research's TurboQuant combines two techniques, PolarQuant and Quantized Johnson-Lindenstrauss (QJL), to compress the key-value (KV) cache of large language models. It quantizes the cache to as little as 3 bits with no retraining, delivering up to 6x lower memory use and, at 4-bit, up to 8x faster computation of attention logits. In tests on Gemma and Mistral, downstream results were reportedly unaffected.
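To put the memory claim in context, here is a minimal back-of-the-envelope sketch of how KV-cache size scales with the number of bits stored per value. The function name `kv_cache_bytes` and the model shape (32 layers, 8 KV heads, head dimension 128, 32,768 tokens) are illustrative assumptions, not figures from the article, and the calculation counts raw bits only, ignoring any per-block metadata a real quantizer may store.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bits_per_value):
    """Approximate KV-cache size in bytes for a single sequence."""
    # Two tensors (keys and values) per layer, one vector per token per KV head.
    values = 2 * layers * kv_heads * head_dim * seq_len
    return values * bits_per_value / 8


# Hypothetical model shape, chosen only for illustration (not from the article).
shape = dict(layers=32, kv_heads=8, head_dim=128, seq_len=32_768)

baseline = kv_cache_bytes(**shape, bits_per_value=16)
for bits in (16, 4, 3):
    size = kv_cache_bytes(**shape, bits_per_value=bits)
    print(f"{bits:>2}-bit: {size / 2**30:.2f} GiB ({baseline / size:.2f}x smaller)")
```

Under these assumptions a 16-bit cache of 4 GiB shrinks to 1 GiB at 4 bits and 0.75 GiB at 3 bits. The raw-bit ratio at 3 bits is about 5.3x, so the reported "up to 6x" figure presumably depends on the baseline and implementation details that this summary does not give.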

- Google’s TurboQuant AI-compression algorithm can reduce LLM memory usage by 6x (Ars Technica)

- Chip Selloff Deepens After Google Touts Memory Breakthrough (Yahoo Finance)

- Google’s TurboQuant Compression Could Increase Demand For AI Memory (Forbes)

- A Google AI breakthrough is pressuring memory chip stocks from Samsung to Micron (CNBC)

- Micron’s stock is dropping. Is Google partly to blame? (MarketWatch)

Want the full story? Read the original article

Read on Ars Technica